Shiyi Cao 曹诗怡

I am a third-year Ph.D. student in EECS at UC Berkeley, advised by Ion Stoica and Joseph Gonzalez, and affiliated with the Sky Computing Lab and BAIR.

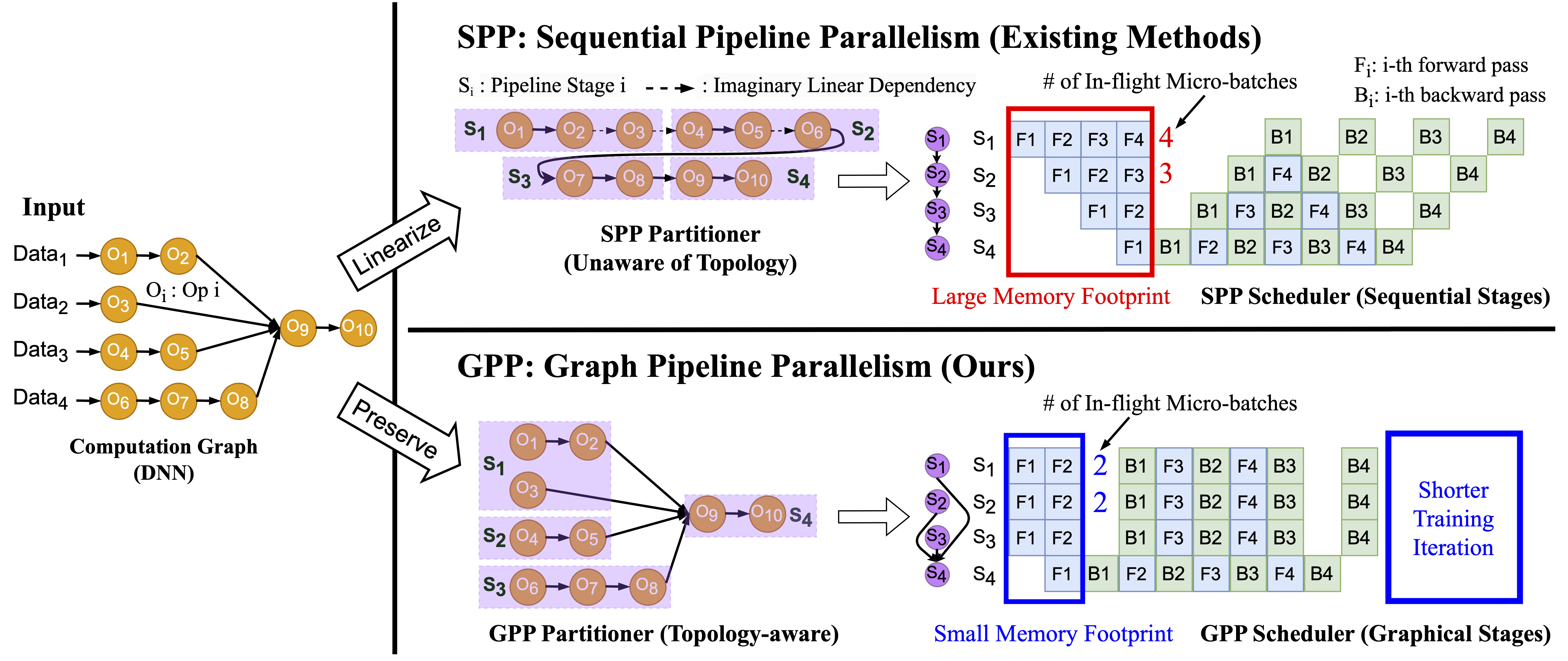

Previously, I was fortunate to work with Zhihao Jia at CMU on accelerating distributed training. I obtained an M.S. in Computer Science from ETH Zurich, working at SPCL with Torsten Hoefler. Before grad school, I had a great time at Shanghai Jiao Tong University, where I earned a bachelor's degree in Computer Science.

I am mainly interested in automated optimization of ML computations on large-scale heterogeneous hardware.